Many of the physical systems we encounter in our daily lives are influenced by human behaviour and are therefore incredibly complex to describe mathematically. This is, for example, the reason we know so little about the Earth’s climate system, making climate forecasting a very risky business. A perfect climate model, one that could predict the climate’s variations with high accuracy, would require knowledge of an absurd number of properties and variables. These factors include the behaviour of natural heat sources (particularly solar and geothermal), the composition of the atmosphere (particularly humidity and clouds) as well as the gradients of pressure and temperature at each location and at each moment in time.

Common practice in solving such complex problems is to split the system into constituents (specialisation), then split the constituents once again into their smaller parts (super-specialisation), and model those smaller parts separately in great detail. In science, the latter is often done with differential equations, leading to finite-difference algorithms. However, one cannot expect all these narrow packages of knowledge to give insight into the workings of the overall system. Looking again at the Earth’s climate system, we cannot treat the dynamic properties of the Earth (its orbit around the sun, its rotation around its axis), of the natural heat systems, of the oceans and of the atmosphere (with its convection and radiation processes) as separate constituents and then bring them together later on. System behaviour is determined not only by the behaviour of the constituent elements, but also by the (generally nonlinear) interaction between these elements. A complex system is full of feedback loops, both positive and negative. Science urgently needs knowledge that encompasses true integration of all those specialisms. In such an integration endeavour, data-driven, nonlinear imaging is indispensable.

A traditional modelling algorithm may be scientifically fully correct, but if the underlying assumptions are no good then the output has no practical value.

Professor Guus Berkhout at the Delft University of Technology (TU Delft) believes that for highly complex systems another approach is required. Instead of working with small-scale, parameter-rich differential equation models, it is better to describe these complex systems with large-scale, parameter-poor integral equation models. By doing this, we don’t start with assumptions and forward modelling but we start with measurements and forward imaging. Bear in mind that in traditional scientific modelling, detail in the output comes from the information in the assumptions. However, in the imaging approach, detail in the output comes from the information in the measurements.

In conclusion, if we deal with a highly complex system, measurements, not assumptions, should lead the way. The history of science tells us over and over again that new scientific insights originate from improved measurements. In this issue of Research Features, Professor Berkhout shows how this is done for the Earth’s geological system in all its complexity.

We can never achieve a big step forward if we stay within the same concept.

Imaging provides a snapshot that reveals the internal state of a system at a particular moment in time. The higher the quality of measurement, the higher the image resolution. Time-lapse imaging shows the system’s dynamic behaviour.

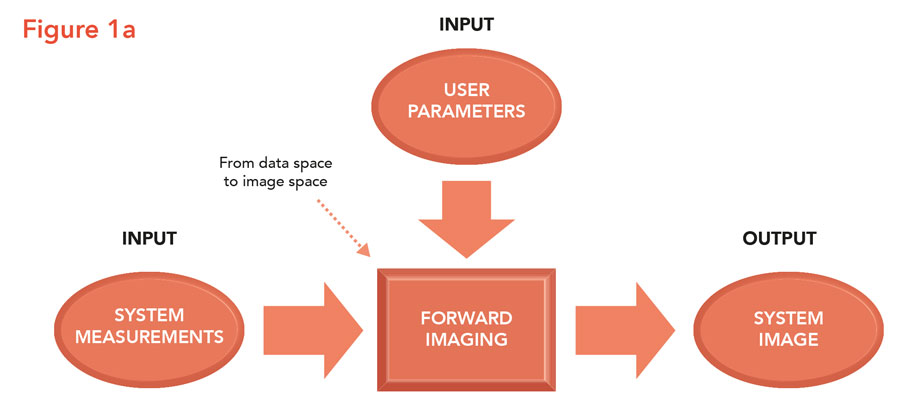

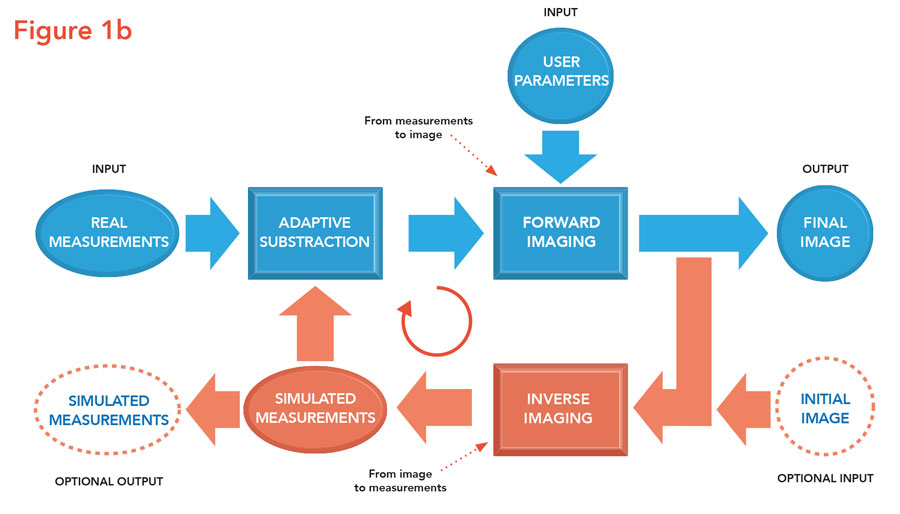

Forward and inverse imaging

Traditionally, imaging is an open-loop process. System measurements are input to the imaging algorithm and a system image is produced (see Figure 1a). Real-life examples include echo scans in health care, sonar scans in marine imaging, seismic scans in geo imaging and radar scans in atmosphere imaging. Unlike traditional modelling, the user only needs to provide a few imaging parameters such as effective propagation velocities. However, high-resolution imaging requires something extra: a feedback loop must be included, leading to a closed-loop imaging process. In this iterative process the imaging result is fed to an inverse imaging algorithm that transforms the image back into measurements (Figure 1b). These numerical measurements are compared with the true measurements, and the difference is then imaged, leading to a residual image that is used to update the existing image. In addition, by analysing the residual image the imaging parameters can be updated and refined. This procedure is repeated until the residual is sufficiently small (‘iterative imaging’). In practice, the industry generally deals with sparse measurements, which makes iterative imaging a must.
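The closed loop described above can be sketched in a few lines of Python. This is a toy illustration under strong assumptions, not the Delphi algorithm: inverse imaging is modelled as a plain matrix `A` (image to measurements), forward imaging as its scaled transpose, and the update is a simple gradient (Landweber) step. The names `iterative_imaging`, `A`, `step` and the random test data are hypothetical, and the imaging parameters are kept fixed rather than refined from the residual image.

```python
import numpy as np

def iterative_imaging(A, measured, n_iter=50):
    """Toy closed-loop imaging: update the image until the data residual is small.

    A        : assumed linear inverse-imaging operator (image -> measurements)
    measured : vector of true measurements
    Returns the final image and the residual norm at each iteration.
    """
    # A step below 2 / ||A||_2^2 guarantees the residual never grows.
    step = 1.0 / np.linalg.norm(A, 2) ** 2
    image = np.zeros(A.shape[1])
    history = []
    for _ in range(n_iter):
        simulated = A @ image                 # inverse imaging: image -> numerical measurements
        residual = measured - simulated       # compare with the true measurements
        history.append(np.linalg.norm(residual))
        residual_image = A.T @ residual       # forward imaging of the difference
        image = image + step * residual_image # update the existing image
    return image, history

# Hypothetical test case: 40 measurements of a 20-element image with two reflectors.
rng = np.random.default_rng(0)
A = rng.normal(size=(40, 20))
true_image = np.zeros(20)
true_image[[3, 11]] = [1.0, -0.5]
measured = A @ true_image

image, history = iterative_imaging(A, measured, n_iter=50)
print(f"residual norm: first {history[0]:.3f}, last {history[-1]:.3f}")
```

The first pass through the loop is the open-loop result (a single forward imaging step); every later pass uses the residual to correct it. This plain gradient step is a convenience for the sketch and may need more iterations than a production scheme would.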

Inverse imaging is the counterpart of forward imaging; it transforms the image space back into the data space. The data residuals provide information on how to improve the image, leading to an iterative imaging process.

Seismic imaging circle

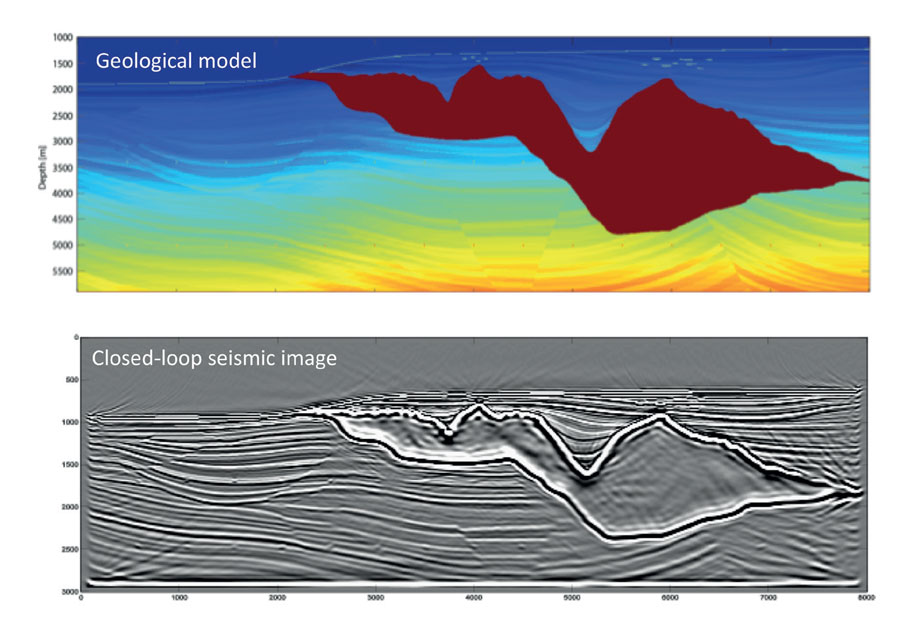

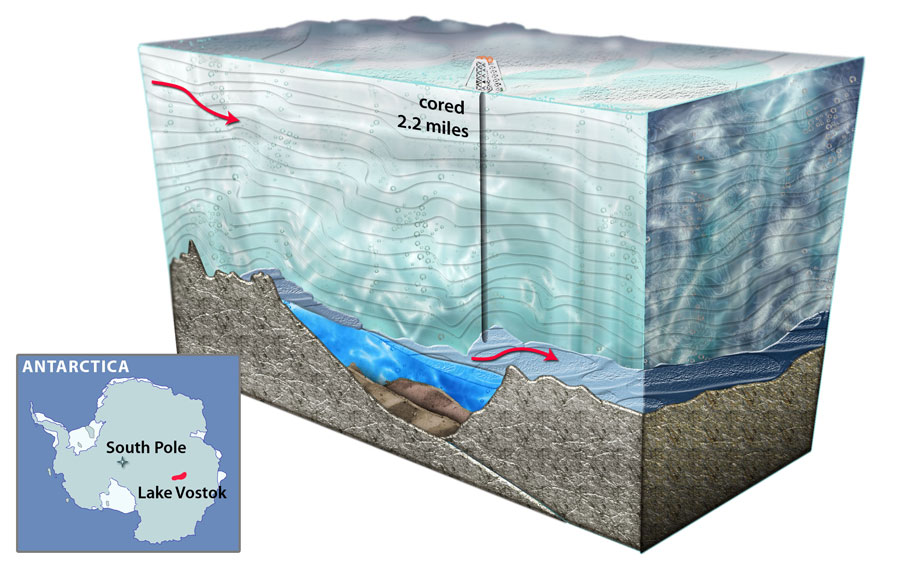

The geo-energy industry relies on accurate knowledge of the Earth’s geological system. Inaccuracies could cause painful business mistakes with big financial consequences. In this industry a prime tool is seismic imaging, in which the seismic sources are manmade. In seismic data acquisition these sources (situated at and/or below the Earth’s surface) generate elastic wavefields inside the Earth, and the wavefield responses are recorded by many thousands of detectors. By continuously moving the entire acquisition system, a large measurement area is covered. The recorded measurements (up to several terabytes of data) are fed into the iterative seismic imaging algorithm as described above. But there is more. Imaging results are also translated to the detailed rock properties of the geological layers. By traditional forward modelling, these rock properties are transformed into numerical measurements for validation purposes, leading to iterative inversion. Iterative imaging and iterative inversion are carried out simultaneously in one circular acquisition – imaging – inversion process (‘circular imaging’). Figure 2 shows the ‘Seismic Imaging Circle’. The final result provides validated snapshots of the Earth’s subsurface in terms of elastic properties of the geological boundaries (shape and reflectivity) and elastic properties of the geological layers (velocity and density). Note from Figure 2 that the measurement system is an integral part of the imaging circle. It allows two validation processes: inverse imaging (image validation) on the right-hand side and forward modelling (property validation) on the left-hand side. Large residuals show that something is wrong with the imaging process, the modelling process, or both.

The imaging circle connects the traditional modelling community with the traditional imaging community and extends both approaches to an iterative version.

From linear to nonlinear imaging

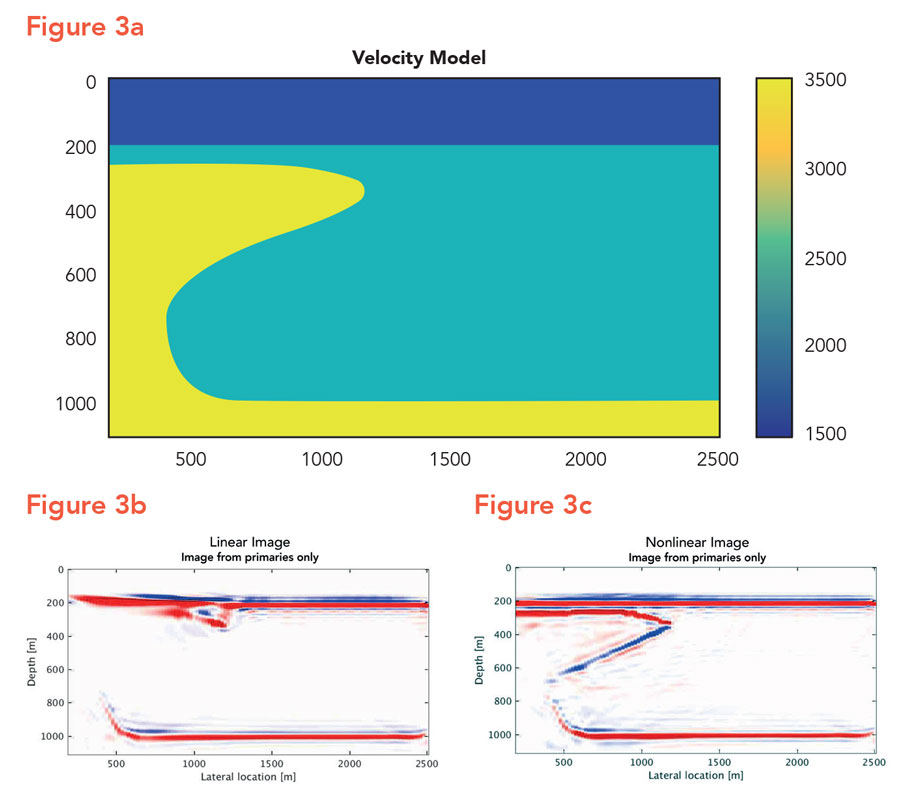

Multiple scattering events (‘multiples’), consisting of higher-order reflections (which are reflected more than once), have always played a vital role in seismic imaging. Traditionally, they are considered unwanted events (referred to as ‘source-generated noise’) that interfere with – or may even overshadow – the first-order or primary reflections (reflected only once). In the past 50 years enormous investments have been made to remove multiples, making the seismic reflection response linear in reflectivity and, therefore, simplifying the imaging algorithm significantly (linear imaging). However, in the late nineties Professor Berkhout brought to the attention of the imaging community that this ‘noise reduction’ approach for the creation of linearised datasets was not only a very complex procedure but also reduced the amount of information extracted from the measurements. Instead, he proposed that, not only should the higher-order reflections be left in the data sets, but that they should be exploited to allow imaging of geological zones that could not be illuminated by a linearised approach (‘linear illumination gap’). The principle is illustrated in Figure 3. Figure 3a shows a subsurface model that is illuminated by manmade surface sources at the right-hand side only. Figure 3b shows the linear image and Figure 3c the nonlinear image. Note the contribution of the higher-order reflections.
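To see why multiples make the response nonlinear in reflectivity, consider a deliberately simplified case, assumed here for illustration and not taken from Professor Berkhout’s formulation: a single horizontal reflector with reflection coefficient $r$ below a perfectly reflecting free surface (reflection coefficient $-1$), a normal-incidence plane wave with source wavelet $w(t)$, a two-way travel time $\tau$, and no spreading or absorption losses. The trace recorded at the surface is then

$$
d(t) \;=\; r\,w(t-\tau) \;-\; r^{2}\,w(t-2\tau) \;+\; r^{3}\,w(t-3\tau) \;-\; \dots \;=\; \sum_{n=1}^{\infty} (-1)^{n-1}\, r^{n}\, w(t-n\tau).
$$

The first term is the primary and is linear in $r$. Every later term is a surface multiple and depends on a higher power of $r$. Linear imaging keeps only the first term and must remove the rest beforehand, whereas nonlinear imaging treats all terms as signal.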

The more complex the system, the more scientists should rely on measurements.

Professor Berkhout’s multiple scattering approach is particularly critical for seismic imaging because in this application perfect data rarely exist. The measured data are represented in data matrices that serve as inputs to the algorithms, and in practice these matrices are sparse: most of their elements are zero. However, by including the multiple scattering events in the data matrix, the extended algorithm uses the extra information to fill in the linear gaps, leading to nonlinear imaging.

Science and industry

Driven by his ambition to develop high-resolution imaging algorithms that are used in real life, Professor Berkhout established a science–industry consortium at TU Delft in 1982, initially involving five companies. It quickly became apparent though, due to the relevance of the scientific problems his team was tackling, that more companies wanted to join. Today, the Delphi consortium consists of 30 international companies, all active in the geo-energy industry and utilising the Delphi results for improving their business.

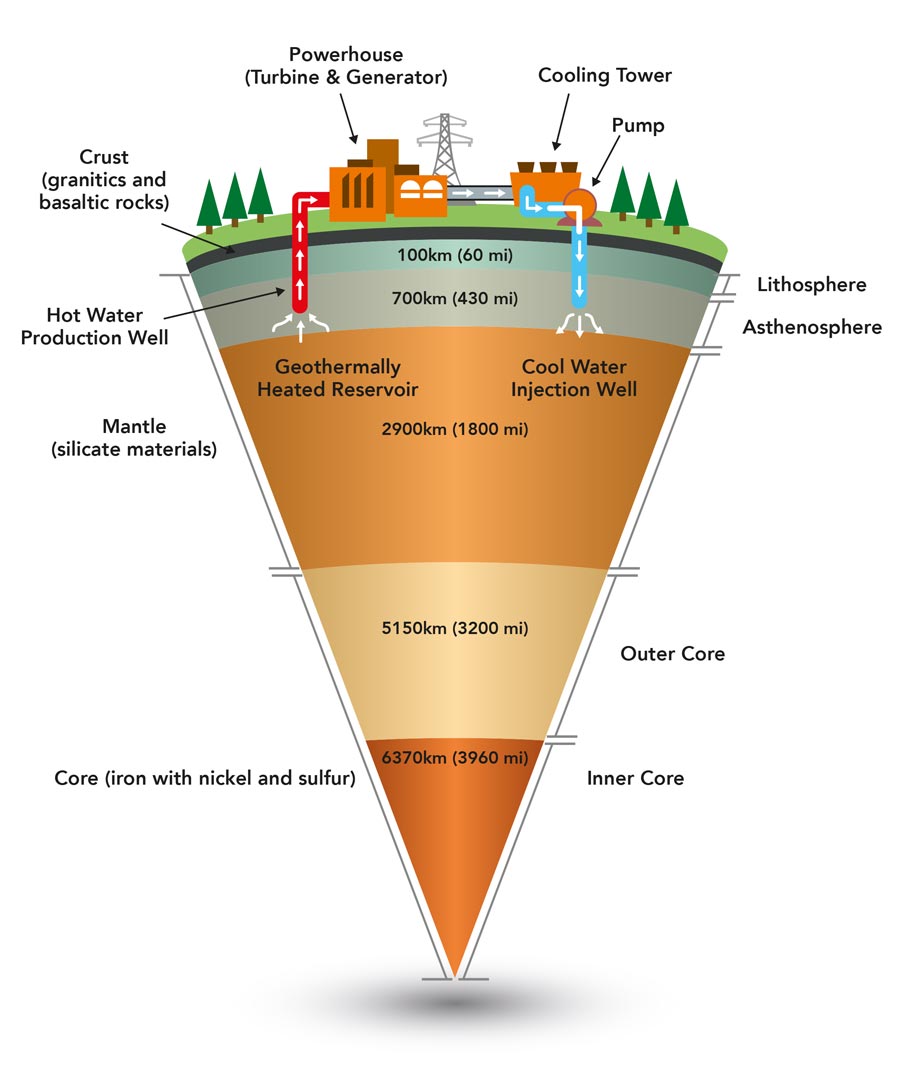

The Delphi research is not only important for the geo-energy industry. Better understanding of the complex dynamic processes in the Earth is also of significant importance for society as a whole. Consider the utilisation of clean geothermal energy, or safe underground storage, or the historical archive hidden in geological layering. This archive is invaluable to reveal the continuously changing natural environment on planet Earth over millions of years. It is expected that the geo-sciences will play a key role in climate change research, revealing historical changes in the Earth’s climate.

We should not shape the measurements to fit our algorithms, but we should shape our algorithms to fit the measurements.

In conclusion, the Delphi team (ca. 25 researchers, from PhD to professorial level) does not look at the problems of one company, but at the needs and opportunities of an entire industrial sector. Hence, Delphi has to concentrate on issues that are important for all member companies, and the research programme should focus on solutions that matter to the entire sector. Above all, the Delphi research must provide solutions that are game changing. If not, members leave.

The tradition in seismic imaging is shaping the data to the algorithms by linearising the measurements. However, we should not shape the data to fit our algorithms, but we should shape our algorithms to fit the data. That is what nonlinear imaging is doing.

How long do these nonlinear imaging algorithms take to run?

The costs of running the nonlinear algorithms depend on the number of iterations (typically fewer than ten). However, bear in mind that the costs of running imaging algorithms are very low compared with the costs of acquiring the input data. In addition, high-resolution snapshots are indispensable in the geo-industry’s decision-making process for large investments.

Do you think this type of modelling will be more commonly used?

Yes, the geo-industry cannot afford to stay with the traditional linear approach. Today’s targets are situated below complex geological structures and linearisation often generates big illumination gaps. More importantly, nonlinear imaging is part of the ‘Nonlinear Imaging Circle’, connecting the different scientific worlds of modelling and imaging. This Circle is a game-changing scientific concept for the industry.

What has made your collaboration with industry so successful?

It is most inspiring to observe the technological advances in an industrial sector and to derive from these advances the sector’s long-term needs. The biggest mistake professors can make is to ask companies what they, the professors, can do for them. Successful collaboration requires the opposite: companies expect a professor to tell them which scientific opportunities are critical to their future success. My advice is that university professors should never carry out contract research for a single company.

What other scientific problems do you want to extend your imaging approach to?

Climate imaging is at the top of my list. In this highly complex global problem traditional forward modelling is too simple. New opportunities arise by applying the ‘Nonlinear Imaging Circle’, which connects forward and inverse modelling with forward and inverse imaging in an iterative manner. Circular imaging may also become a game changer in the climate world.

References

- AJ Berkhout. [online] Guus Berkhout. Available at: http://www.aj-berkhout.com/ [accessed August 2018]
- Guus Berkhout. [online] Wikipedia. Available at: https://en.wikipedia.org/wiki/Guus_Berkhout [accessed August 2018]

Professor Guus Berkhout from the Delft University of Technology proposes a data-driven, nonlinear imaging concept (‘circular imaging’) to generate high-resolution snapshots of the Earth’s geological system.

Funding

The author is grateful to the Delphi Consortium Members for their continuing financial support and for the stimulating discussions on long-term industry needs during the Delphi meetings.

Collaborators

Dr Eric Verschuur and Dr Gerrit Blacquiere, the current directors of Delphi.

Bio

Guus Berkhout started his career with Shell Research in 1964, where he held several international positions. In 1976 he accepted a Chair at Delft University of Technology in the field of geophysical and acoustical imaging. During 1998 – 2001 he served as a member of the University Board. In 2001 he also accepted a chair in the field of system innovation. He is founder of the Delphi Science-Industry Consortium, which is financed by thirty international geo-energy companies.


Contact

Professor Guus Berkhout

Delphi Science-Industry Consortium

Delft University of Technology,

Mekelweg 5, 2628 CD Delft,

Netherlands

E: guus.berkhout@delphi-consortium.com

T: +31 70 358 5277

W: http://delphi-consortium.com/