- Consciousness is a concept with a history of long-standing debate among scientists.

- Researchers are yet to connect the brain’s physical processes with the phenomenon of consciousness.

- Miss Hana Hebishima, Miss Mina Arakaki, and Professor Shin-ichi Inage at Fukuoka University, Japan, have developed a new model of consciousness – the Human Language-based Consciousness or HLbC model.

- Applying their model to psychological consciousness and psychological time, the researchers propose answers to philosophical questions relating to consciousness.

We usually think of consciousness in terms of the subjective inner states experienced by humans, such as emotion, thought, perception, and self-awareness. Scientists from a variety of academic disciplines, from psychology to philosophy, neuroscience to mathematics, have been debating how these conscious phenomena arise in the brain. Neuroscientists have investigated the relationship between conscious phenomena and neural circuit activity in the brain, but have yet to get a good grasp of the matter.

Consciousness poses many problems and is the cause of many religious and philosophical debates. For instance, physicalists, who believe that all mental phenomena are ultimately physical phenomena, suggest that consciousness arises from material brain activity. In contrast, dualists, who hold that mind and body are fundamentally distinct, advocate that consciousness is a non-physical phenomenon separate from brain activity. Panpsychists, meanwhile, believe that all things have a mind or a mind-like quality, and speculate that consciousness comes from a ‘universal field of consciousness’.

At Fukuoka University in Japan, Miss Hana Hebishima, Miss Mina Arakaki, and Professor Shin-ichi Inage are taking a different view – they propose a new model of consciousness, based on mathematical formulation.

Hard problem of consciousness and inverted qualia

The central question is: how does consciousness arise from the brain? This scientifically challenging problem of explaining how the physical processes in the brain relate to the subjective experience of consciousness is called the hard problem of consciousness. Research is gradually revealing how the brain’s neural circuits process visual information, but how this brain activity produces the subjective experience of vision is yet to be fully explained. Thus, a knowledge gap exists in connecting the brain’s physical processes with the phenomenon of consciousness.

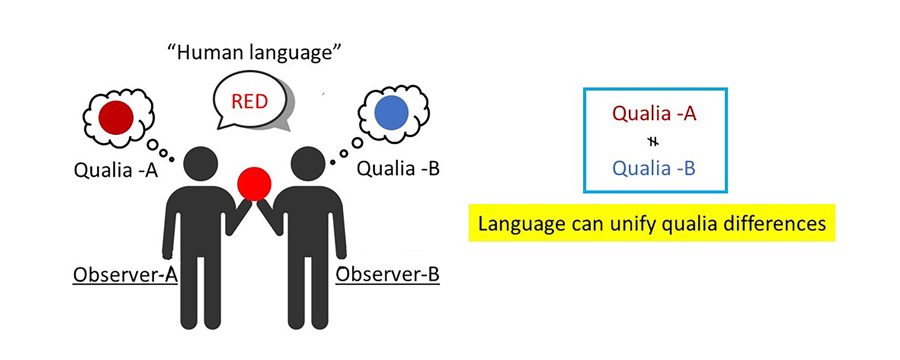

One might then wonder – what causes two people to have different subjective experiences, or qualia, when exposed to the same stimulus? For example, one person looks at a red apple and their brain’s neural pathways react to the stimulus, making them feel ‘red’. Another person who looks at the same red apple might have different neural pathways and not feel ‘red’, but instead associate the apple’s colour with a different sensation. This phenomenon is called inverted qualia. The problem of inverted qualia will have to be solved first, so that the hard problem of consciousness can be resolved and the relationship between physical brain processes and subjective experiences can be explained.

Hebishima, Arakaki, and Inage have developed a new model of consciousness – the Human Language-based Consciousness or HLbC model. They demonstrate inverted qualia mathematically using computational models with artificial intelligence and show how colour blindness and inverted qualia can occur. The researchers define second-person consciousness, including qualia, and investigate how to create models of consciousness based on mathematically definable human languages by leveraging previously established models that we will now explore.

Philosophical zombie and integrated information theory

The philosophical zombie is a thought experiment designed to challenge physicalist accounts of consciousness and qualia. Philosophical zombies are physically identical to humans, but they lack consciousness. Hebishima, Arakaki, and Inage explain that philosophers hope to gain insight into what consciousness is by assuming that philosophical zombies exist.

Integrated Information Theory (IIT) is a theory proposed by Giulio Tononi, a neuroscientist and psychiatrist, with the aim of elucidating the origin and essence of consciousness. It states that consciousness exists in and of itself, being a fundamental and realistic aspect of the universe, and that it is entirely subjective. In other words, it starts from the perspective of the conscious entity itself. While this theory continues to be debated, it is regarded as one of the most prominent frameworks for gaining insights into the nature of consciousness.

Physicalist monism of consciousness and dualism of consciousness

Physicalist monism of consciousness assumes that consciousness results from physical processes, therefore suggesting that brain activity generates consciousness. In contrast, dualism of consciousness suggests that physical phenomena alone cannot explain consciousness. The assumption here is that consciousness arises from non-physical entities such as the soul or mind and is controlled by brain activity.

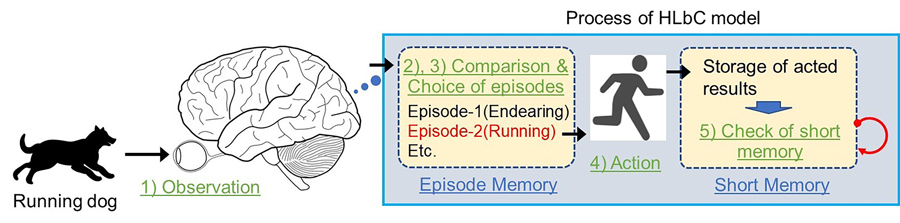

The HLbC model, however, represents will and consciousness based on human language. In this model, the human language is defined mathematically in a probability space (the set of all possible outcomes, together with the probabilities assigned to collections of those outcomes). Then, episodic memories are defined by relating sentences, actions, and emotions. While the debate about whether consciousness is a physical or non-physical entity is ongoing, Inage and colleagues have chosen to base their model on physicalist monism.

Confirming consciousness

In response to observations from all five senses, the brain unconsciously and randomly selects actions from past episodic memories and executes them. The results of these actions are stored in the short-term memory area, and this is the point at which consciousness is confirmed. The HLbC model formulates each process mathematically, defining consciousness as a physical entity. Hebishima, Arakaki, and Inage describe the stage where the brain selects actions from past episodic memories during the formulation as a ‘random selection process of episodic memory’.
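The loop described above (observe, randomly select an action from episodic memory, execute it, store the result in short-term memory) can be sketched in a few lines of Python. This is only an illustrative toy, not the researchers' formulation: the `EpisodicMemory` fields mirror the sentence, action, and emotion triple mentioned in the article, while the `weight` field, the size of the short-term store, and all function names are assumptions made for the example.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodicMemory:
    sentence: str   # what was said or thought
    action: str     # what was done
    emotion: str    # how it felt
    weight: float   # hypothetical selection weight, not from the article


def select_memory(memories, rng):
    """Randomly pick one episodic memory, biased by its weight."""
    weights = [m.weight for m in memories]
    return rng.choices(memories, weights=weights, k=1)[0]


def respond(observation, memories, short_term, rng):
    """Select an action at random, 'execute' it, and record the result."""
    chosen = select_memory(memories, rng)
    result = (observation, chosen.action, chosen.emotion)
    short_term.append(result)  # the step the article ties to confirming consciousness
    return result


memories = [
    EpisodicMemory("The apple looks ripe", "eat", "pleasure", 3.0),
    EpisodicMemory("The stove is hot", "withdraw hand", "fear", 1.0),
    EpisodicMemory("A friend waves", "wave back", "joy", 2.0),
]
short_term = deque(maxlen=5)   # small, bounded short-term store
rng = random.Random(0)         # seeded only so runs are repeatable

for observation in ["sees an apple", "hears a greeting", "feels heat"]:
    print(respond(observation, memories, short_term, rng))
```

In this toy the selection weights are fixed and ignore the observation. A closer reading of the model would presumably let the observation shape the selection probabilities, but the article does not say how.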

As well as explaining why consciousness is generated, the HLbC model clarifies many phenomena occurring in psychology. These include differences in the conscious experience of time, and an interpretation of the quantum-mechanical measurement problem. The model also allows the process by which the brain selects actions in response to sensory input to be described, by analogy, with the Schrödinger equation (the fundamental equation governing the wave function of a quantum-mechanical system). According to the researchers, this is consistent with recent research suggesting that the brain selects actions randomly.
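For reference, the time-dependent Schrödinger equation that the analogy draws on has the standard form shown first below. The remaining expressions are an illustrative assumption about how such an analogy might be read: a state $\psi$ expanded over candidate memories $\phi_k$, with the probability of selecting memory $k$ given by $|c_k|^2$. The article does not spell out how the HLbC model assigns these quantities.

$$
i\hbar \frac{\partial \psi}{\partial t} = \hat{H}\psi, \qquad \psi = \sum_k c_k \phi_k, \qquad P(k) = |c_k|^2, \qquad \sum_k |c_k|^2 = 1
$$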

The research team hopes to apply this mathematical model to psychological awareness and psychological feelings of time, for example. If this can be achieved, it is expected to be a highly versatile model of consciousness. Inage and colleagues will continue their research and hope that the proposed model will provide answers to some of the philosophical questions about consciousness.

What are the main obstacles faced by researchers attempting to solve the hard problem of consciousness?

The complexity of the consciousness model is often discussed, but it ultimately revolves around the question of whether the brain can understand itself. The range of possible explanations, including our own proposal, is practically unlimited. From the perspective of physicalist monism to the idea that consciousness emerges through quantum mechanics, various intriguing notions are present.

The crucial aspect lies in how well we can replicate reality among these nearly infinite ideas, and this verification process is of utmost importance. The issue of consciousness extends beyond merely understanding how consciousness arises; it encompasses the comprehensive explanation of diverse phenomena such as psychological illusions, behavioural psychology, and even our perception of time.

Unravelling these questions is vital for deepening our knowledge and understanding. Contemporary science and philosophy actively engage in unravelling the mysteries of consciousness, with the expectation of further insights and discoveries. In our journey of exploration, we should continue to approach the essence of consciousness through diverse perspectives and approaches.

Why did you choose to base the HLbC model on the physical monism perspective?

Dualism posits that the mind and body are distinct entities and exist independently from each other. The mind refers to non-material aspects such as consciousness, thoughts, and subjective experiences, while the body is considered a physical entity. In this view, although the mind and body interact, they are fundamentally different in nature.

However, within this perspective, the exact nature of the difference between material and non-material and the location of their boundary are not clear. It is also conceivable that material and non-material can alternate at this boundary. This issue adds significant complexity to our understanding of the phenomenon of consciousness.

On the other hand, if consciousness is explained by physical monism, the aforementioned problem may be resolved. At least for us, simplicity becomes an important factor to consider.

What aspects of this ongoing research have surprised you most?

First, we attempted to simulate inverted qualia using a neural network. As a result, it was demonstrated that inverted qualia can indeed occur within a neural network. Specifically, qualia and inverted qualia were shown to arise from subtle differences in parameters within the neural network. This suggests that everyone may perceive phenomena, such as the experience of colours, with different qualia. This is a fascinating finding to consider.
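The idea that two networks can agree on a colour word while differing internally can be illustrated with a deliberately tiny example. This is not the simulation the researchers ran: the hand-set weights, the swap of two hidden units, and the use of the most active hidden unit as a stand-in for an ‘internal experience’ are all assumptions made for illustration.

```python
def matvec(weights, x):
    """Multiply a small weight matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=values.__getitem__)


LABELS = ["red", "green", "blue"]

# Observer A: hidden unit i responds to colour channel i.
A_HIDDEN = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
A_OUT = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

# Observer B: hidden units 0 and 1 are swapped, and the output layer
# is swapped to match, so the spoken label is unchanged.
B_HIDDEN = [[0, 1, 0], [1, 0, 0], [0, 0, 1]]
B_OUT = [[0, 1, 0], [1, 0, 0], [0, 0, 1]]


def perceive(hidden, out, rgb):
    """Return (most active hidden unit, colour word) for an RGB input."""
    h = matvec(hidden, rgb)
    label = LABELS[argmax(matvec(out, h))]
    return argmax(h), label


RED = [1, 0, 0]
print(perceive(A_HIDDEN, A_OUT, RED))  # observer A
print(perceive(B_HIDDEN, B_OUT, RED))  # observer B
```

Both observers report ‘red’ for the same input, yet a different hidden unit is most active in each. From the shared label alone the difference cannot be detected, which is the point the interview goes on to make about language.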

On the other hand, upon further reflection, it seems more challenging to imagine mechanisms by which different brains could all perceive a colour as the ‘same red’. However, we, as humans, can recognise and communicate red as a common colour (despite differences in individual perceptions) using a shared language. Our proposal is therefore based on the concepts of language and memory.

Through language and shared memories, we can establish a collective illusion of experiencing the same qualia, enabling us to function and communicate effectively without difficulty. This perspective suggests that, despite having different subjective experiences, we are still able to share a common understanding and meaning through the power of language and memory. The proposed model invites intriguing insights and encourages profound contemplation.

How does your model of consciousness manage to explain it as a philosophical concept?

According to the proposed model, behaviour in response to observation is defined as the brain randomly selecting from memories and subsequently recognising the outcome of that behaviour. This random selection is believed to depend on the brain’s physical conditions. This conclusion leads to a negative answer to the philosophically significant question, ‘Does free will exist?’ However, there is a wide-ranging debate regarding the existence of free will. The definition and understanding of free will are subjects of discussion from various perspectives, fuelling philosophical controversies. Therefore, determining the truth of this assertion requires additional evidence and further discourse.